10,000 HLS/RTMP Live Video Streaming Servers With Docker + Nginx

It used to be hours of tedious work, combined with expensive proprietary licenses for single-server software desperately straining a CDN. Video streaming was a pain. A real pain. If it wasn't the client CPU speed and bad codecs, it was the ISP bandwidth, or the server capacity.

If you want to spin up a service like Netflix or YouTube, it's easier because of the technologies available, but it's tougher because of the scale. To be honest, it's never been easy; the problem has merely moved location: from the pipe, to the server farm.

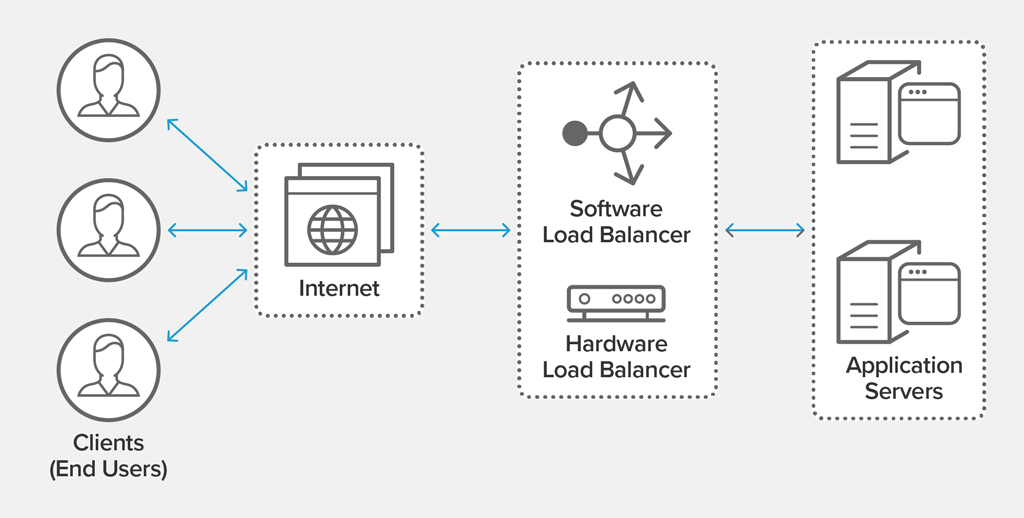

Which brings us onto our challenge. We all know the high-availability architecture for web applications using load balancers.

We all know how Peering (https://en.wikipedia.org/wiki/Peering) and CDNs (https://en.wikipedia.org/wiki/Content_delivery_network) work.

Let's say we need to create 10,000 video streaming servers which can read static files from their origin (with unlimited bandwidth), but also host live services which record to disk. It's an arbitrary number as usually only 5% of your users are on simultaneously.

How do we mutate to that from what we know about an HTTP web application?

First, some streaming history.

Back in the Days of the USSR

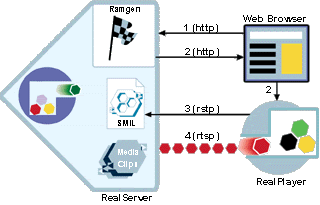

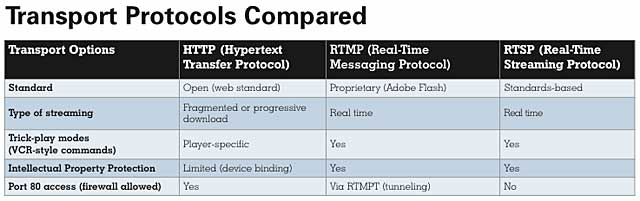

In the old 90s days of POTS modems, RealNetworks pioneered the Real Time Streaming Protocol (RTSP: https://en.wikipedia.org/wiki/Real_Time_Streaming_Protocol) as a specialist alternative to HTTP, then produced RealServer, which morphed into Helix Server (https://en.wikipedia.org/wiki/Helix_(multimedia_project)).

The theory was fairly simple: it worked as a stateful protocol on port 554 over TCP, and was a control layer on top of the Real-time Transport Protocol (RTP) and the Real-time Transport Control Protocol (RTCP). An example PLAY request looked like this:

    C->S: PLAY rtsp://example.com/media.mp4 RTSP/1.0
          CSeq: 4
          Range: npt=5-20
          Session: 12345678

    S->C: RTSP/1.0 200 OK
          CSeq: 4
          Session: 12345678
          RTP-Info: url=rtsp://example.com/media.mp4/streamid=0;seq=9810092;rtptime=3450012

Specification: https://www.ietf.org/rfc/rfc2326.txt
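To make the request/response shape concrete, here is a minimal Python sketch that formats a PLAY request like the one above and splits a response into a status code and headers. The function names are made up for illustration, and it only handles the text framing: no sockets, no RTP, no session setup.

```python
def build_play_request(url: str, cseq: int, session: str, start: float, end: float) -> str:
    """Format an RTSP/1.0 PLAY request using CRLF line endings."""
    lines = [
        f"PLAY {url} RTSP/1.0",
        f"CSeq: {cseq}",
        f"Range: npt={start:g}-{end:g}",  # normal play time, in seconds
        f"Session: {session}",
    ]
    return "\r\n".join(lines) + "\r\n\r\n"


def parse_response(raw: str) -> tuple[int, dict[str, str]]:
    """Split an RTSP response into its status code and a header dict."""
    head = raw.split("\r\n\r\n", 1)[0]
    status_line, *header_lines = head.split("\r\n")
    _version, code, _reason = status_line.split(" ", 2)
    headers = {}
    for line in header_lines:
        # Split on the first colon only, so URL values survive intact.
        name, _, value = line.partition(":")
        headers[name.strip()] = value.strip()
    return int(code), headers


request = build_play_request("rtsp://example.com/media.mp4", 4, "12345678", 5, 20)
code, headers = parse_response(
    "RTSP/1.0 200 OK\r\nCSeq: 4\r\nSession: 12345678\r\n\r\n"
)
```

The Session header is what makes the protocol stateful: the client has to echo the same value on every request until teardown.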

Almost Gone In A Flash

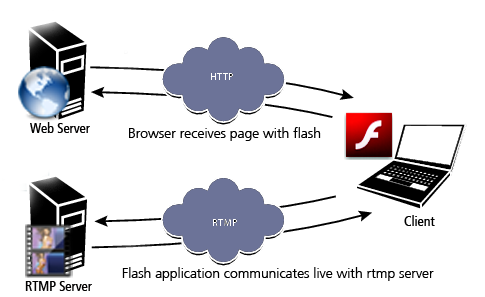

Then came the days of Flash. It wasn't long before the funky animation plugin transformed into a suite of products which offered functionality on the backend. 2002 brought along Macromedia's Real-Time Messaging Protocol (RTMP: https://en.wikipedia.org/wiki/Real-Time_Messaging_Protocol) for audio, video, and data.

It was slightly similar to MPEG transport, with different channels to handle different data types, such as Flash Video and Action Message Format (AMF: https://en.wikipedia.org/wiki/Action_Message_Format) for binary data. It was open-sourced in 2012 by Adobe.

It eventually mutated into an SSL version (RTMPS), an encrypted DRM version (RTMPE), an HTTP-tunnelled version for getting through firewalls (RTMPT), and a UDP variant (Real-Time Media Flow Protocol, RTMFP).

Specification: http://wwwimages.adobe.com/content/dam/Adobe/en/devnet/rtmp/pdf/rtmp_specification_1.0.pdf

Around the same time, competitors and innovators sprang up. The best known were:

- Wowza Media Server: https://en.wikipedia.org/wiki/Wowza_Streaming_Engine

- Unreal Media Server: https://en.wikipedia.org/wiki/Unreal_Media_Server

- Red5 Media Server: https://en.wikipedia.org/wiki/Red5_(media_server)

Back to Good Old HTTP

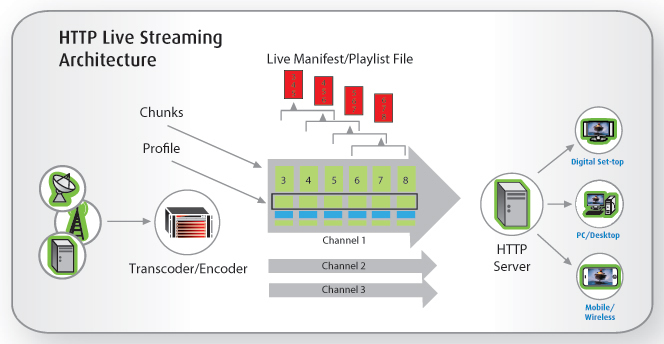

With the increase in available bandwidth, and increasingly effective compression algorithms (codecs), HTTP became viable again as a transport protocol for extremely large files. However, not all connections were equal, and HTTP is stateless.

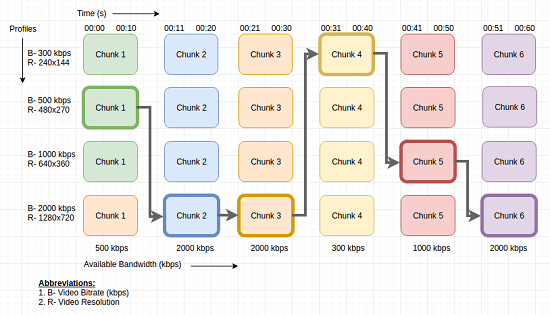

Four gorillas developed different technologies based on the idea of Adaptive Bitrate Streaming (https://en.wikipedia.org/wiki/Adaptive_bitrate_streaming):

- MPEG Dynamic Adaptive Streaming over HTTP (DASH, 2011): https://en.wikipedia.org/wiki/Dynamic_Adaptive_Streaming_over_HTTP

- Adobe HTTP Dynamic Streaming (HDS, 2010-ish): https://www.adobe.com/products/hds-dynamic-streaming.html

- Apple HTTP Live Streaming (HLS, 2009): https://en.wikipedia.org/wiki/HTTP_Live_Streaming

- Microsoft Smooth Streaming (Silverlight, 2008): https://docs.microsoft.com/en-us/iis/media/smooth-streaming/smooth-streaming-transport-protocol

The idea is to split large video files into smaller segments which play in sequence, with a playlist telling the player which segment comes next. It's also pretty handy in stopping people downloading entire files.
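As a rough illustration of the segmenting idea, the Python sketch below writes the kind of HLS media playlist a player would read for a file cut into fixed-length chunks. The `segmentN.ts` naming and the 10-second clip are assumptions for the example; no video is actually cut here, only the index is produced.

```python
import math


def media_playlist(total_seconds: float, segment_seconds: int, prefix: str = "segment") -> str:
    """Build an HLS media playlist for a file cut into fixed-length segments."""
    count = math.ceil(total_seconds / segment_seconds)
    lines = [
        "#EXTM3U",
        "#EXT-X-VERSION:3",
        f"#EXT-X-TARGETDURATION:{segment_seconds}",
        "#EXT-X-MEDIA-SEQUENCE:0",
    ]
    for index in range(count):
        # The final segment may be shorter than the rest.
        remaining = total_seconds - index * segment_seconds
        duration = min(segment_seconds, remaining)
        lines.append(f"#EXTINF:{duration:.3f},")
        lines.append(f"{prefix}{index}.ts")
    lines.append("#EXT-X-ENDLIST")  # marks the playlist as complete (not live)
    return "\n".join(lines) + "\n"


playlist = media_playlist(total_seconds=10, segment_seconds=4)
print(playlist)
```

A live stream uses the same format, minus the end marker: the server keeps appending new segments and dropping old ones, and the player keeps re-fetching the playlist.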

And Now To Peer-to-Peer

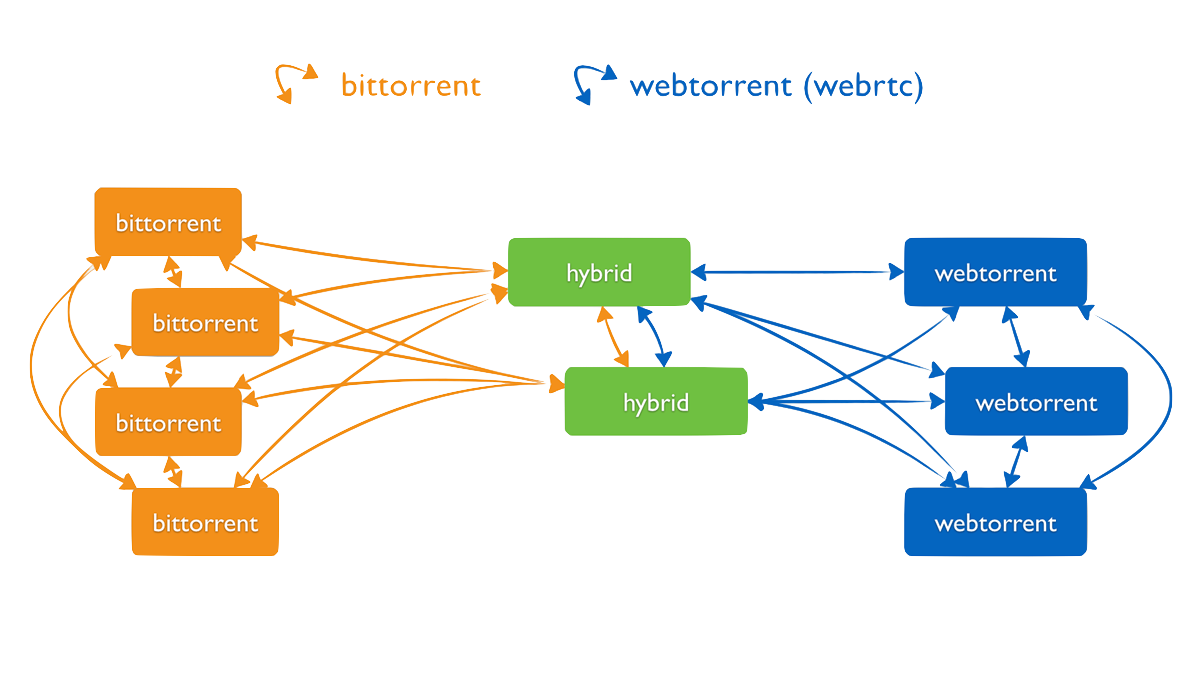

Little can be said about the genius of BitTorrent; or of inverting the bandwidth problem into a positive by making the stream faster according to the demand for it. Peer-to-Peer had been waiting since the Napster/eMule days for an answer, and when it got it, they were out of business.

BitTorrent always had a problem though: it was slow to get going.

Then came WebTorrent: https://webtorrent.io/ and Peerflix (https://www.npmjs.com/package/peerflix-server, which used Torrent Stream: https://github.com/mafintosh/torrent-stream), which eventually produced Hollywood's worst nightmare: Popcorn Time: https://en.wikipedia.org/wiki/Popcorn_Time.

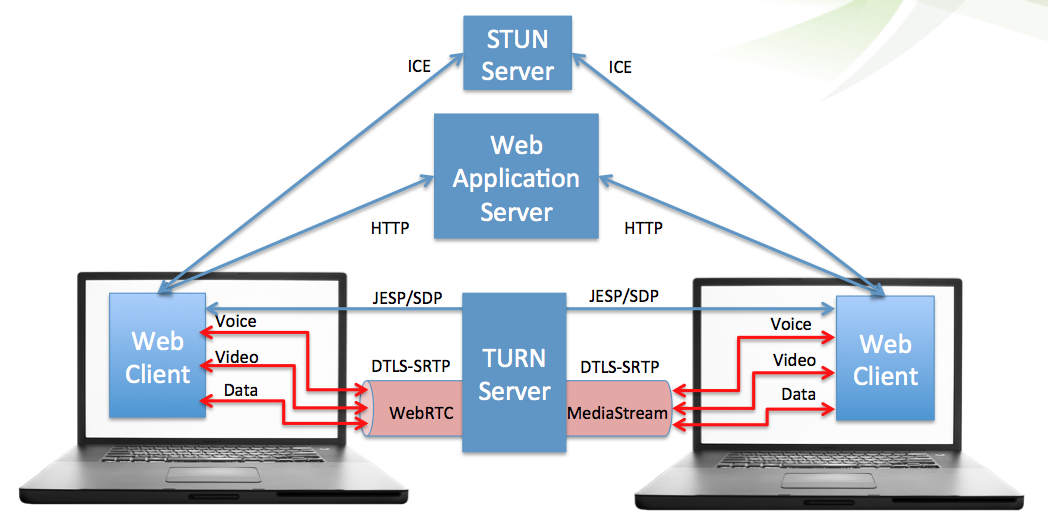

Underlying much of this is the Web Real-Time Communication (WebRTC) standard, proposed by Ericsson / Google around 2011: https://en.wikipedia.org/wiki/WebRTC. It provides Peer-to-Peer connectivity inside the browser for audio, video, and data.

Used in conjunction with other emerging standards like:

- WebVR (https://en.wikipedia.org/wiki/WebVR)

- WebGL (https://en.wikipedia.org/wiki/WebGL)

- WebAssembly (https://en.wikipedia.org/wiki/WebAssembly)

- WebUSB (https://en.wikipedia.org/wiki/WebUSB)

... and it's clear the future of video streaming is right back in the browser, be it on the desktop, phone, or an embedded/headless variant.

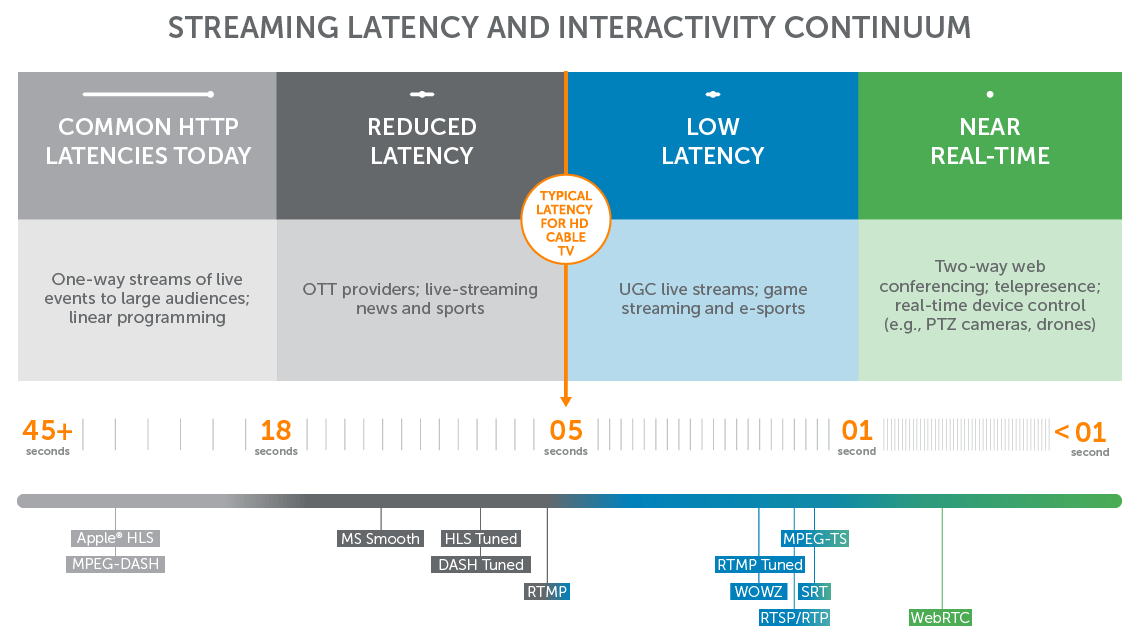

All in all, the history looks a bit like this:

Scaling Up: Containerising Absolutely Everything

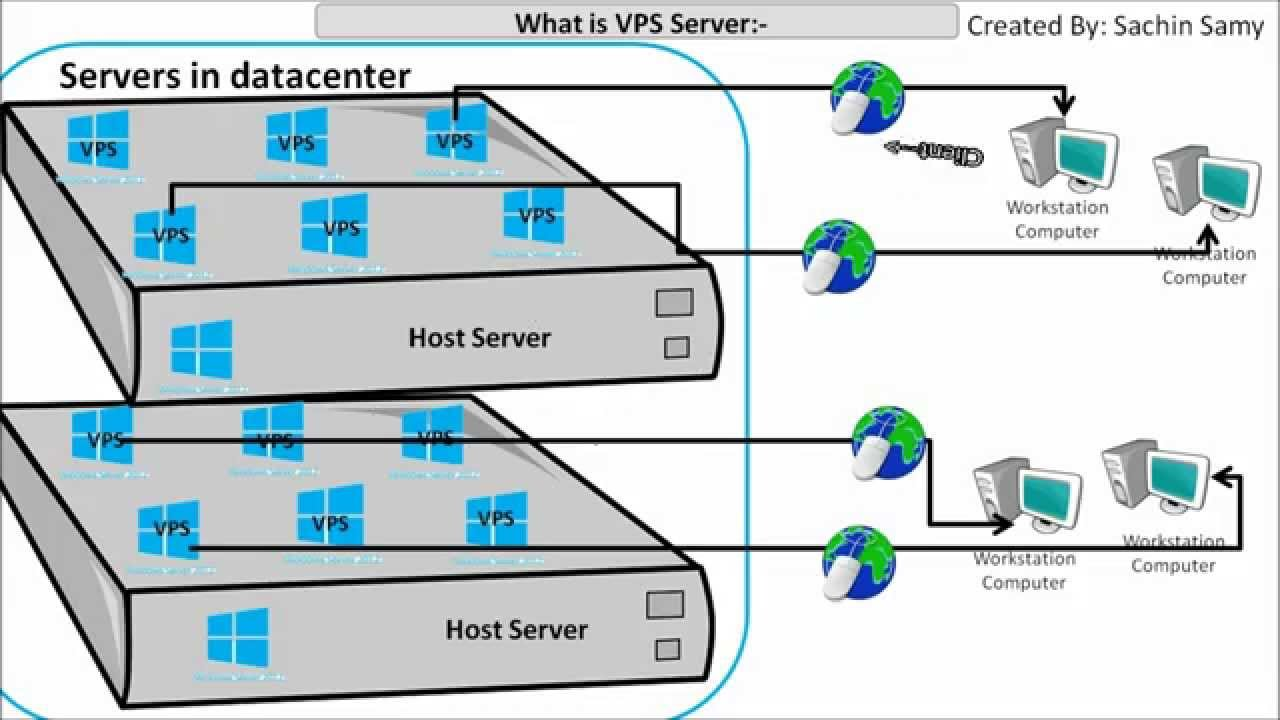

The latest fad in DevOps came from the Cloud practice of subdividing server hardware into potentially hundreds of Virtual servers (i.e. the VPS, Virtual Private Server: https://en.wikipedia.org/wiki/Virtual_private_server). It's a clever business move; instead of one client per server, you can simply provide Virtual Machines on the same box, rather than Virtual Hosts in Apache.

The 3 early players in the Virtualisation Management space were:

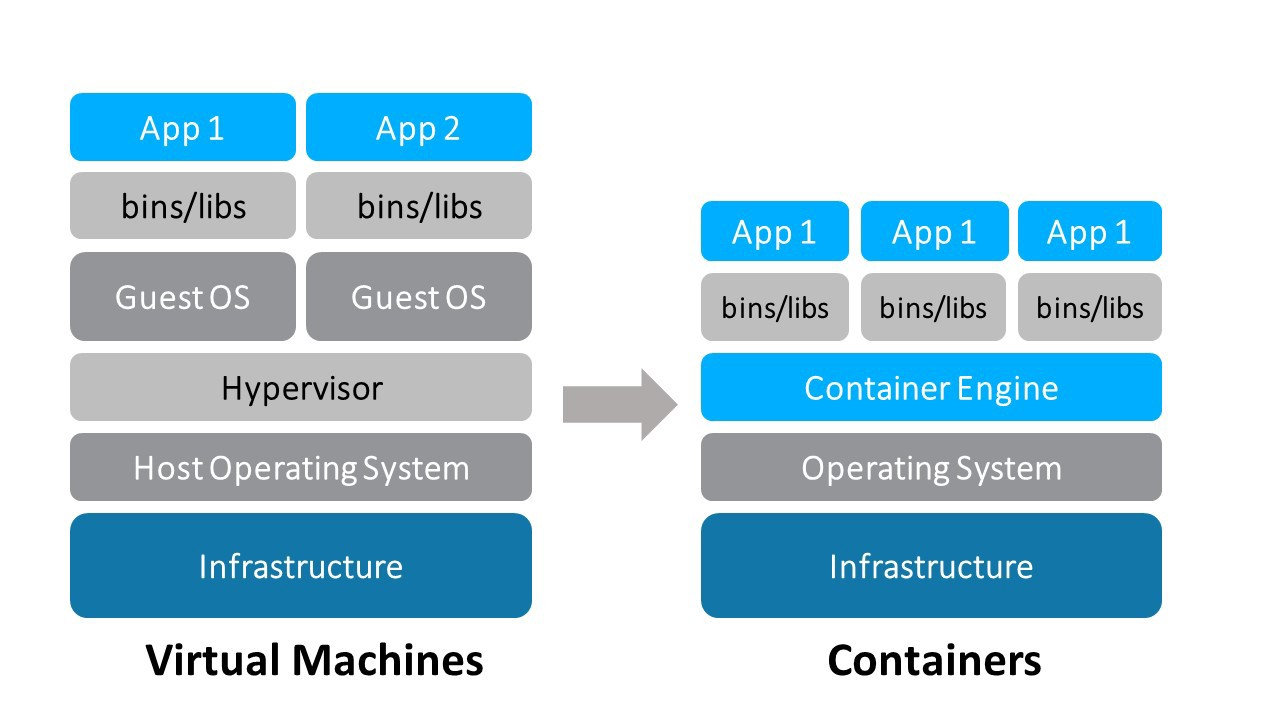

The trouble with VMs is they reserve an enormous amount of RAM and CPU for themselves. Enter Docker from a hackathon around 2013: https://en.wikipedia.org/wiki/Docker_(software)

Docker introduced the idea of Containers.

"A Docker container image is a lightweight, standalone, executable package of software that includes everything needed to run an application: code, runtime, system tools, system libraries and settings."

https://www.docker.com/resources/what-container

Because Docker containers are lightweight, a single server or virtual machine can run several containers simultaneously. So we've subdivided VMs once again, and each container shares the same host OS kernel and hardware.

The bottom line is you can run around 1000 containers within a single Linux OS instance before you run into networking/context-switching issues, and RAM problems.
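To put rough numbers on that, here is a back-of-envelope sketch in Python. It assumes one container per streaming server and takes the ~1000 containers per host figure at face value; the 200,000 total users is a hypothetical figure, picked so that 5% online gives the 10,000 target.

```python
import math


def hosts_needed(containers: int, containers_per_host: int = 1000) -> int:
    """Linux hosts required to run the given number of containers."""
    return math.ceil(containers / containers_per_host)


def online_users(total_users: int, percent_online: int = 5) -> int:
    """Users expected to be connected at the same time."""
    return math.ceil(total_users * percent_online / 100)


streams = online_users(200_000)   # hypothetical user base
hosts = hosts_needed(streams)     # one container per stream (assumption)
print(streams, hosts)
```

In practice you would leave headroom per host rather than packing to the limit, so treat the result as a floor.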

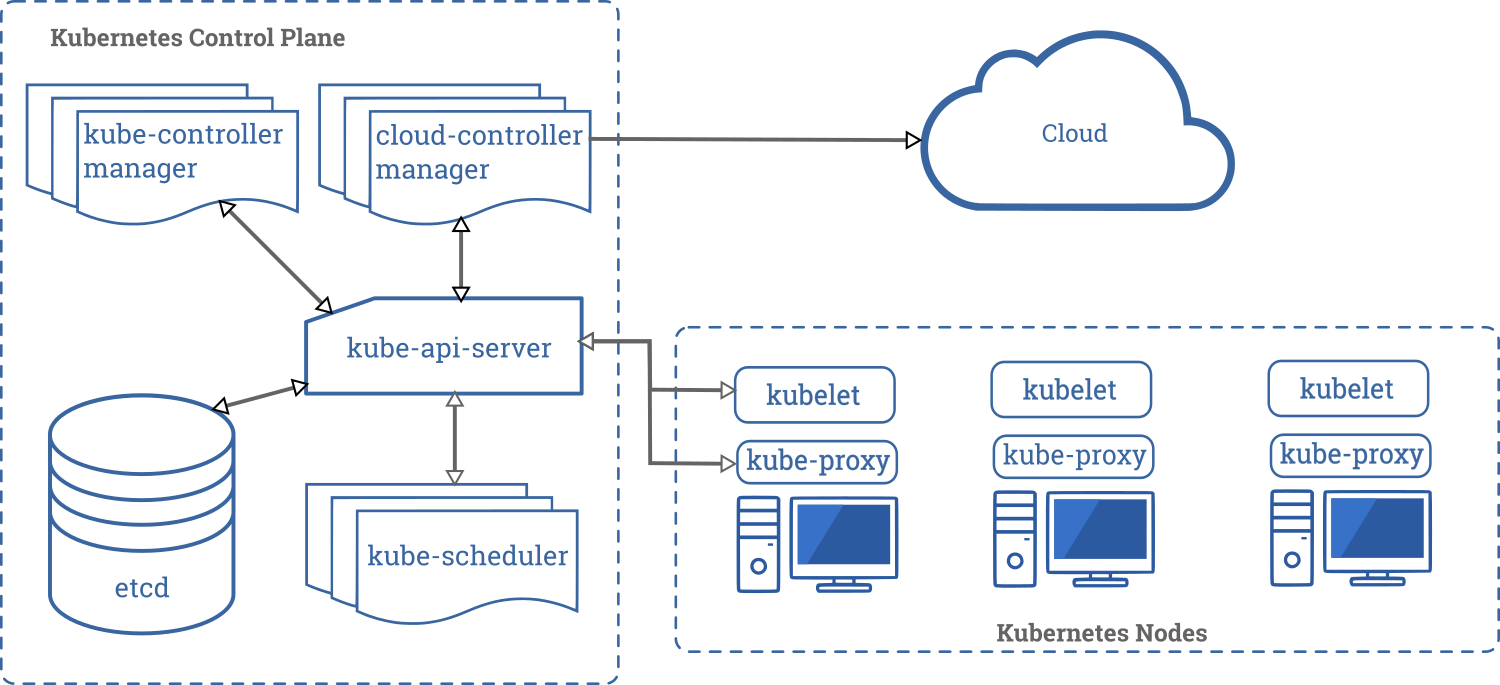

Next up came platform management software for containers ("orchestration"), of which the most well-known is Google's Kubernetes (https://en.wikipedia.org/wiki/Kubernetes), which was developed off their Star Trek-inspired "Borg" system.

Dockerfiles are simple text recipes for containers. For example, Nginx:

    #
    # Nginx Dockerfile
    #
    # https://github.com/dockerfile/nginx
    #

    # Pull base image.
    FROM dockerfile/ubuntu

    # Install Nginx.
    RUN \
      add-apt-repository -y ppa:nginx/stable && \
      apt-get update && \
      apt-get install -y nginx && \
      rm -rf /var/lib/apt/lists/* && \
      echo "\ndaemon off;" >> /etc/nginx/nginx.conf && \
      chown -R www-data:www-data /var/lib/nginx

    # Define mountable directories.
    VOLUME ["/etc/nginx/sites-enabled", "/etc/nginx/certs", "/etc/nginx/conf.d", "/var/log/nginx", "/var/www/html"]

    # Define working directory.
    WORKDIR /etc/nginx

    # Define default command.
    CMD ["nginx"]

    # Expose ports.
    EXPOSE 80
    EXPOSE 443

Nginx: Our Secret Video Weapon

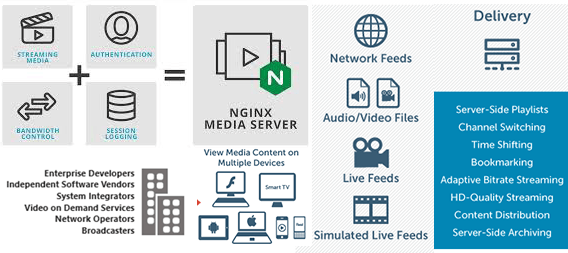

Which brings us neatly onto the HTTP issue. Nginx, obviously, is the new de facto standard web server for applications, and virtually anything which needs authenticated SSL termination/proxying (e.g. Elasticsearch).

What is less well known about Nginx is that it is entirely capable of video streaming (MPEG-DASH, HLS, RTMP) via its RTMP module (libnginx-mod-rtmp):

- https://packages.debian.org/sid/libnginx-mod-rtmp

- https://www.nginx.com/products/nginx/modules/rtmp-media-streaming/

- https://docs.nginx.com/nginx/admin-guide/dynamic-modules/rtmp/

- https://nginx-rtmp.blogspot.com/

- https://github.com/sergey-dryabzhinsky/nginx-rtmp-module

It can push, pull, stream, and record.

Adding a live mount-point for an RTMP stream is trivial:

    rtmp {
        server {
            listen 1935;
            chunk_size 4096;

            application amazeballs {
                live on;
                meta copy;
                record all;
                record_path /var/www/html/recordings;
                record_unique on;
            }
        }
    }

An encoder pushes to rtmp://{server_address}/amazeballs with a stream key of its choosing (e.g. somesecretkeyyouset), and a video viewer client (e.g. VLC) simply connects to rtmp://{server_address}/amazeballs/somesecretkeyyouset to watch.

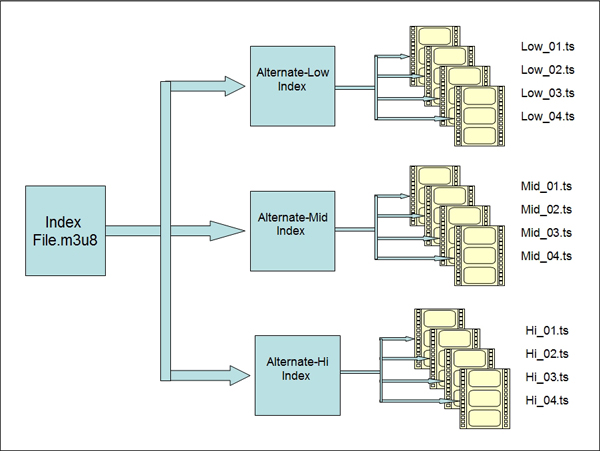

Setting up HLS is just as easy with the hls setting:

    # In the http block (or the location that serves hls_path):
    types {
        application/dash+xml mpd;
        application/vnd.apple.mpegurl m3u8;
        video/mp2t ts;
    }

    rtmp {
        server {
            listen 1935;
            chunk_size 4000;

            application show {
                live on;
                hls on;
                hls_path /path/to/disk;
                hls_fragment 3;
                hls_playlist_length 60;
                hls_nested on;

                # Streams whose names end in _low/_hi are grouped into one variant playlist
                hls_variant _low BANDWIDTH=640000;
                hls_variant _hi BANDWIDTH=2140000;

                # disable consuming the stream from nginx as rtmp
                deny play all;

                # Transcode SOURCE into two renditions and publish them back into this
                # application, so the hls_variant suffixes apply ("-f flv" sets the output format)
                exec_static /usr/local/bin/ffmpeg -i SOURCE
                    -c:v libx264 -g 50 -preset fast -b:v 4096k -c:a libfdk_aac -ar 44100 -f flv rtmp://127.0.0.1/show/stream_hi
                    -c:v libx264 -g 50 -preset fast -b:v 1024k -c:a libfdk_aac -ar 44100 -f flv rtmp://127.0.0.1/show/stream_low;
            }
        }
    }

A video client viewer connects the same way, but over HTTP (assuming an HTTP location, here called hlsballs, serves the hls_path directory), e.g. http://{server_address}/hlsballs/{secret_key}.m3u8
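What the two variant lines buy you is a master playlist that lists each rendition with its bandwidth, leaving the player to choose. The Python sketch below shows roughly that shape and a naive client-side pick, using the two BANDWIDTH values from the config. The nested `stream_low/index.m3u8` layout is an assumption modelled on the nested setting, and the module's exact output may differ.

```python
VARIANTS = {"_low": 640000, "_hi": 2140000}  # suffix -> bits per second


def master_playlist(stream: str, variants: dict[str, int]) -> str:
    """List every rendition of a stream with its advertised bandwidth."""
    lines = ["#EXTM3U", "#EXT-X-VERSION:3"]
    for suffix, bandwidth in variants.items():
        lines.append(f"#EXT-X-STREAM-INF:BANDWIDTH={bandwidth}")
        lines.append(f"{stream}{suffix}/index.m3u8")
    return "\n".join(lines) + "\n"


def pick_variant(measured_bps: int, variants: dict[str, int]) -> str:
    """Choose the highest-bandwidth rendition the connection can sustain."""
    affordable = [(bw, suffix) for suffix, bw in variants.items() if bw <= measured_bps]
    if not affordable:
        # Nothing fits: fall back to the smallest rendition.
        return min(variants, key=variants.get)
    return max(affordable)[1]


print(master_playlist("stream", VARIANTS))
print(pick_variant(1_000_000, VARIANTS))
```

Real players re-measure throughput as they go and switch rendition at segment boundaries, which is the "adaptive" part.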

Or we could pull an existing stream to repeat it:

    application amazeballs-repeater {
        live on;
        pull rtmp://cdn.com:1234/movies/some-endpoint live=1 name=blah;
    }

Maybe that's a webcam (/dev/video0) or video file we're doing something inventive to with FFMPEG:

    ffmpeg -re -f video4linux2 -i /dev/video0 -vcodec libx264 -vprofile baseline -acodec aac -strict -2 -f flv rtmp://localhost/amazeballs/stream

Alternatively, we could push to another cloud service, like Twitch:

    application amazeballs-repeater {
        live on;
        push rtmp://live-point.twitch.tv/app/{stream_key};
    }

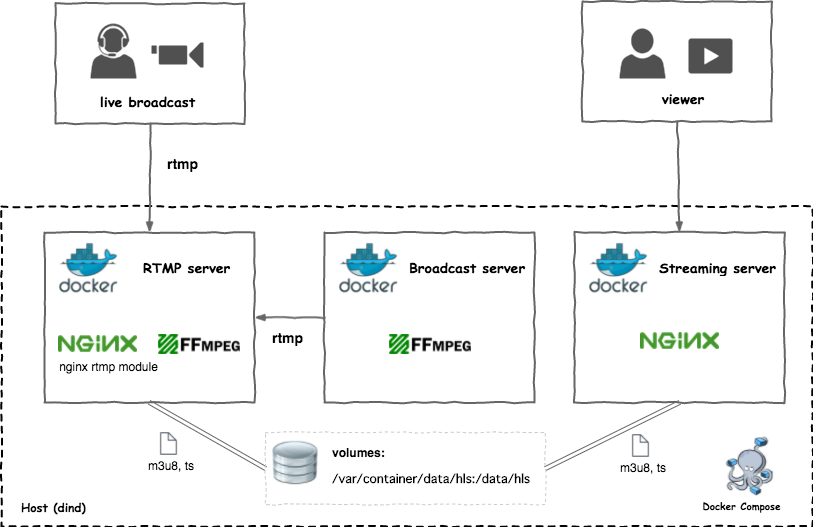

Putting It All Together

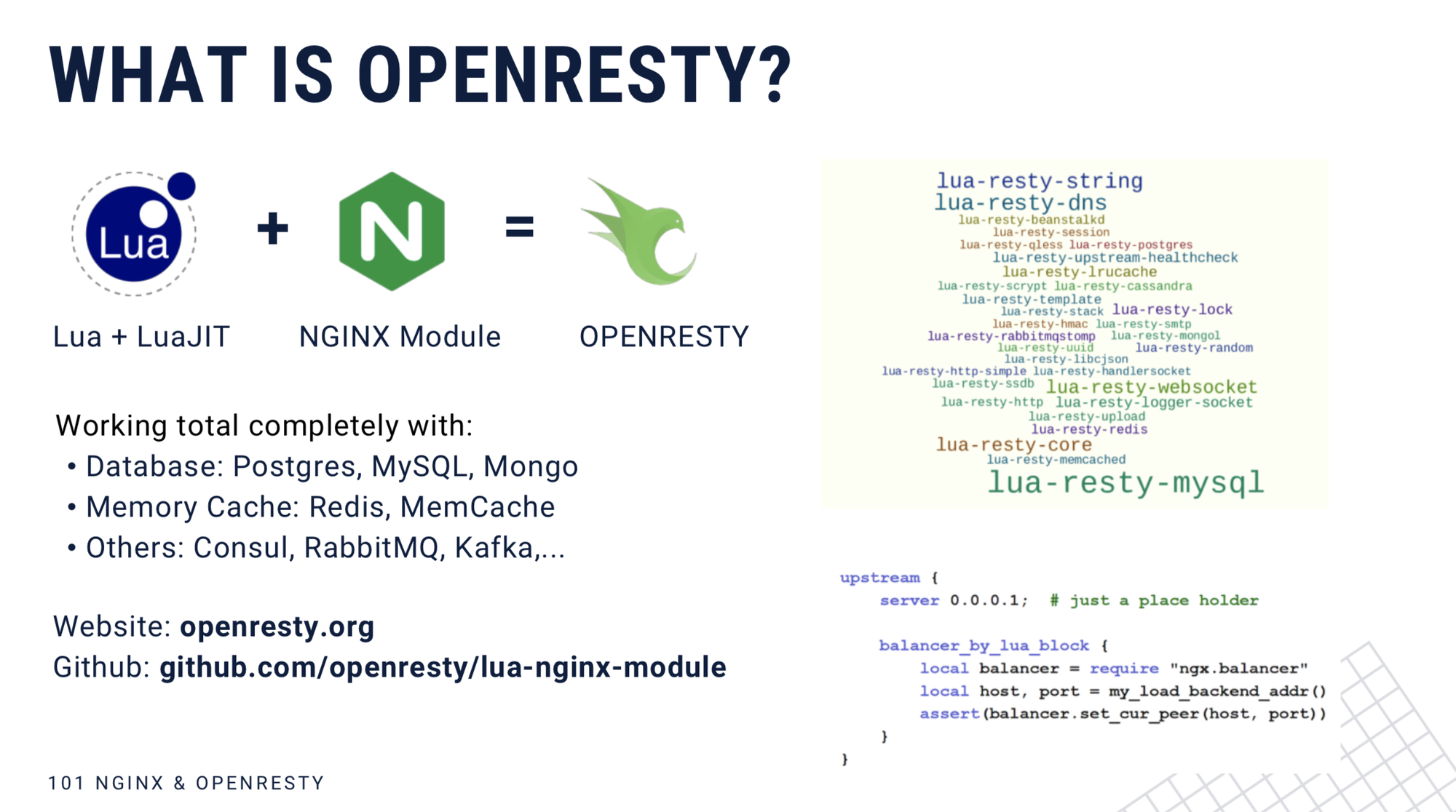

Our web application servers are now fully capable video servers which can be programmatically spawned into a mesh. For the Lua fans, or the brave, you can go even further with Nginx and start with OpenResty: https://openresty.org/en/.

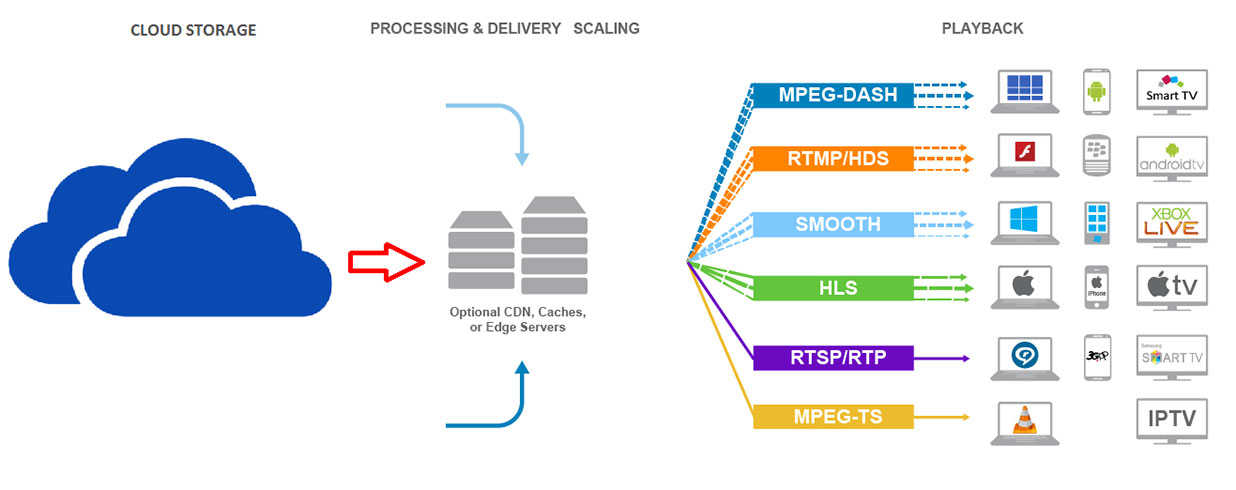

One thing remains: the disk they read/write from. It's pointless to save to local machines if you need to scale.

One option is shared cloud storage, like S3 or a network file system such as EFS (an EBS volume normally attaches to one instance at a time). For that, we can adjust our Dockerfile to mount an S3 drive using something like S3FS-FUSE: https://github.com/s3fs-fuse/s3fs-fuse

Example Dockerfile:

When all our containers work from a shared cloud drive, we're in business. As for containerising RTMP configurations, there are a good few examples:

Example Nginx RTMP Docker containers:

- https://github.com/alfg/docker-nginx-rtmp

- https://github.com/brocaar/nginx-rtmp-dockerfile

- https://github.com/tiangolo/nginx-rtmp-docker

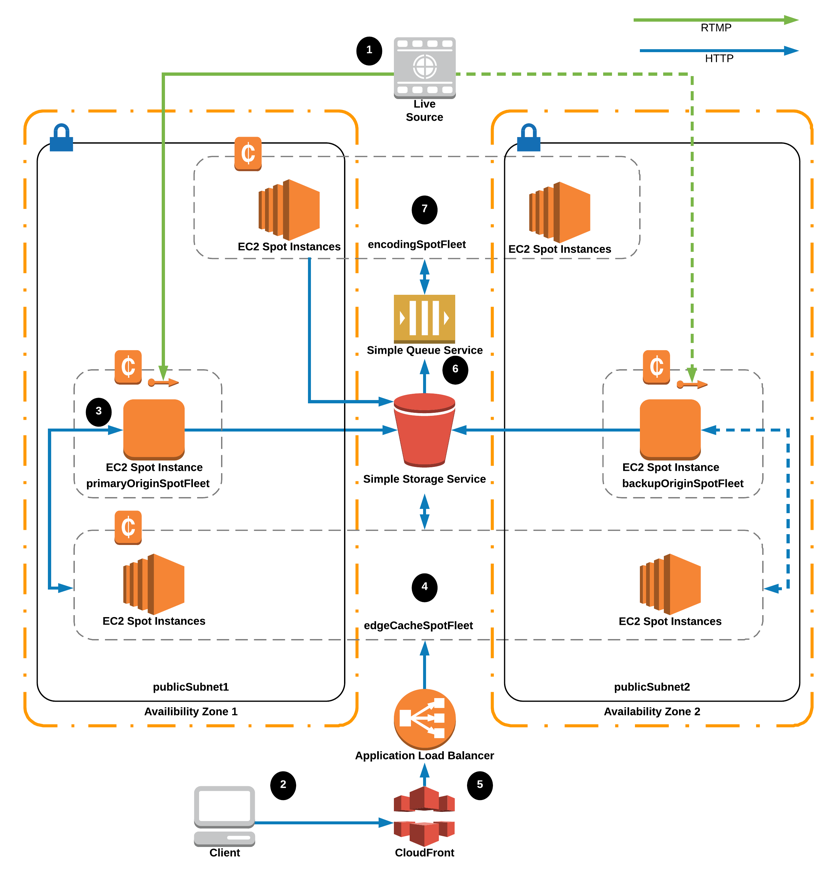

Then we have to get to this:

Et voilà! Our deployment overview:

- Set up read/write cloud HDD;

- Create a Docker container image with Nginx RTMP, the mounted cloud drive, FFMPEG goodies, etc.;

- Spawn/orchestrate as many containers as required using Kubernetes;

- Configure a load balancer to cycle through our containers;

- ????

- PROFIT!!! (for the uninitiated: https://knowyourmeme.com/memes/profit)
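The load-balancer step in the overview boils down to handing each new viewer the next container in the pool. A toy round-robin in Python, assuming three made-up backend addresses on the RTMP port; a real deployment would lean on the cloud provider's or Kubernetes' own balancing rather than anything hand-rolled.

```python
from itertools import cycle


def make_balancer(backends):
    """Return a function that hands out backends in round-robin order."""
    pool = cycle(backends)

    def next_backend():
        return next(pool)

    return next_backend


# Hypothetical container addresses, all listening on the RTMP port.
backends = [f"10.0.0.{n}:1935" for n in range(1, 4)]
pick = make_balancer(backends)

assigned = [pick() for _ in range(5)]
print(assigned)
```

Round-robin ignores how busy each container is. For long-lived video connections, a least-connections policy is usually the kinder choice.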

More: https://www.slideshare.net/Nginx/video-streaming-with-nginx

Next, of course, is live VR streaming, which, according to AWS, looks a bit like this: